3.1 Weight, paths, connectedness, and clustering

3.1.1 Weighted network

So far, we have assumed that the weight of all links in a network is the same. However, networks can be weighted. Take social network as an example. Although you are connected to many people, the weight of these connections is unequal. You probably talk to your family and your close friends more often than to your acquaintances. In an adjacency matrix, suppose you are represented by node i, your mom node j and one of your acquaintances node m. Also suppose the weight of the link between you and your mom is 10, which is ten times the weight of the link between you and the acquaintance. Then we have Aij=10 and Aim=1.

3.1.2 Shortest path, network diameter, and average path length

3.1.2.1 Shortest path

In the real world, objects have physical distances between each other, and that’s why we need to fly for three hours from New York to Florida. In networks, physical distances are replaced by path lengths: how many links does the path contain?

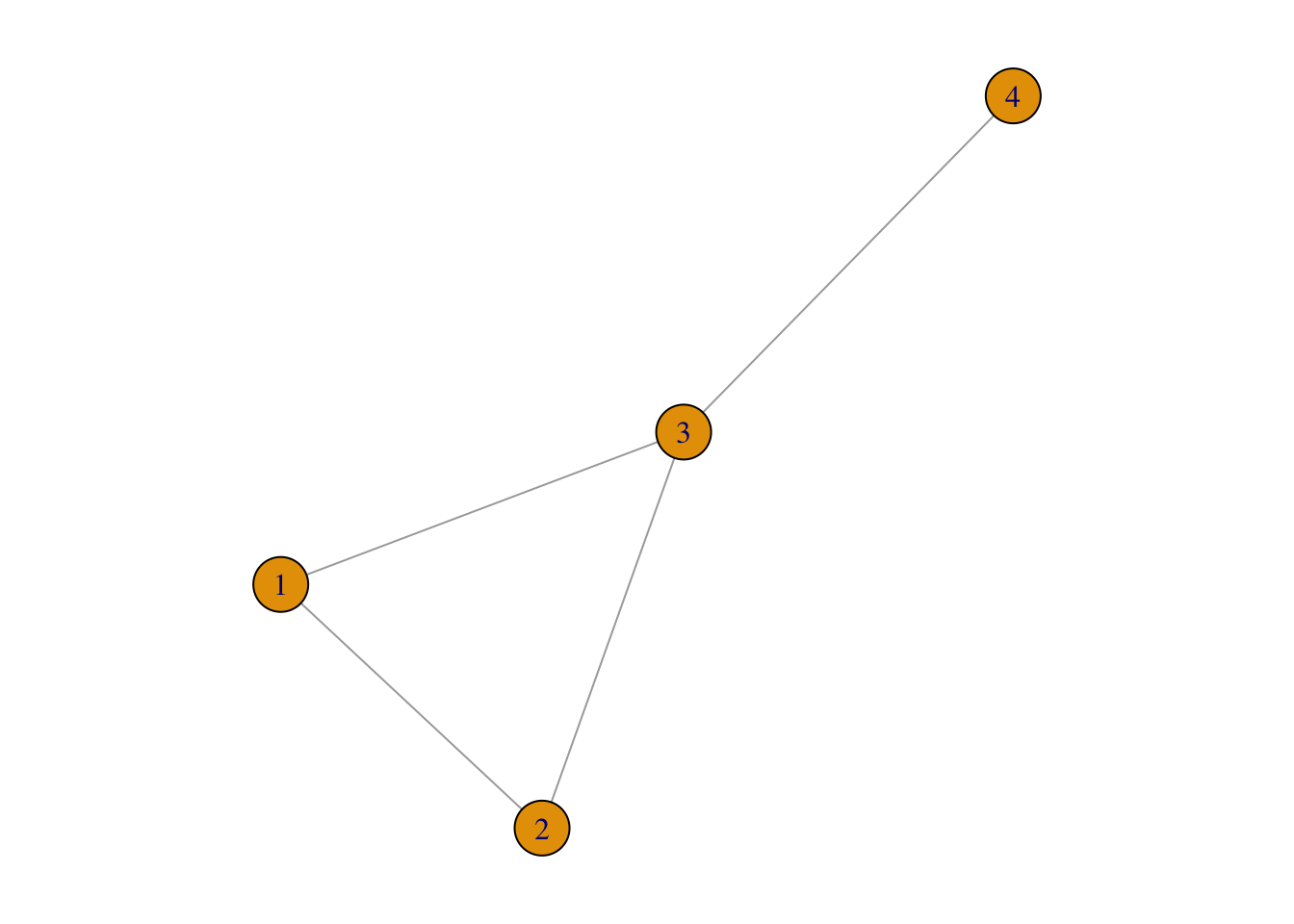

For example, in g1, the path length between node 1 and node 4 can either be 2 (1→3→4) or 3 (1→2→3→4):

par(mar = c(1, 1, 1, 1))

plot(g1)

Obviously, the path length of 2 is smaller than 3. In this case, 1→3→4 is the shortest path (denoted as di,j), which is defined as the path with the fewest number of links. In the above example, d1,4 = 2.

In an undirected network, di,j=dj,k. This often does not hold true in a directed network because:

The existence of (i,j) does not guarantee the existence of (j,i);

Even if both (i,j) and (j,i), the two often are unequal to each other.

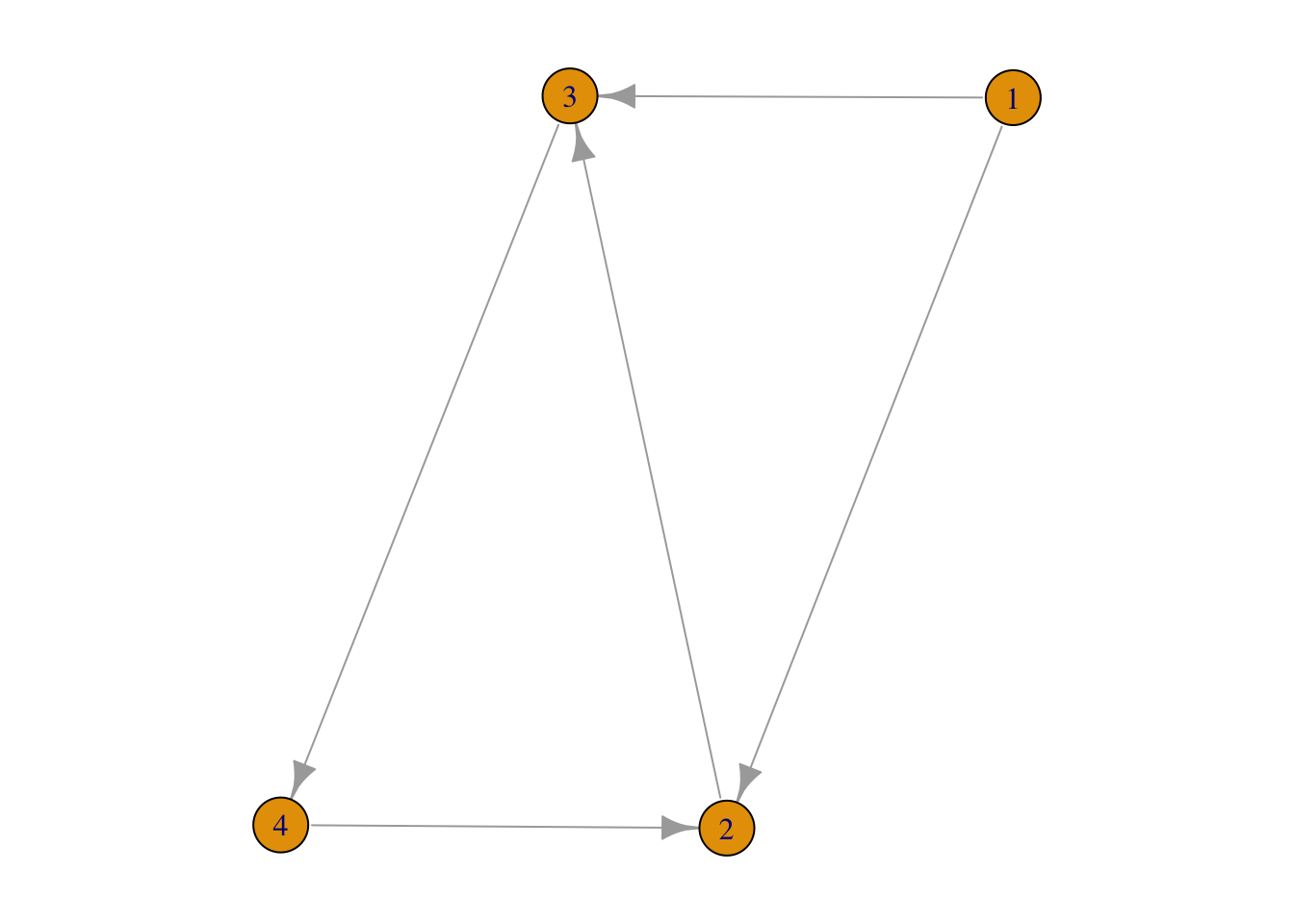

For example,

g3 <- graph( edges=c(1,2, 1,3, 2,3, 3,4, 4,2), n=4, directed=TRUE)

par(mar = c(1, 1, 1, 1))

plot(g3)

FIGURE 3.1: shortest paths in a directed network

There are paths (1→2, and 1→3→4→2) from node 1 to node 2 but we don’t have on any from node 2 to node 1. Furthermore, d2,4=2 whereas d4,2=1.

3.1.2.2 Network diameter

Among all the shortest paths in a network, the longest one is called the diameter of this network, denoted as dmax. In the above Network 3.1, dmax=2: d1,4=d2,4=2.

3.1.2.3 Average path length

Average path length is defined as the average length of shortest paths between any two nodes. For example, in Network 3.1, d1,2=d1,3=d2,3=d3,4=d4,2=1, d1,4=d2,4=d3,2=d4,3=2. Therefore, the average is (1⋅5+2⋅4)5+4=1.44. Lets test it:

average.path.length(g3)## [1] 1.4444443.1.2.4 Breath-first search (BFS) algorithm

As the size of a network gets larger, it will be more difficult to know what is the shortest path between a pair of nodes. For example, without using computers,

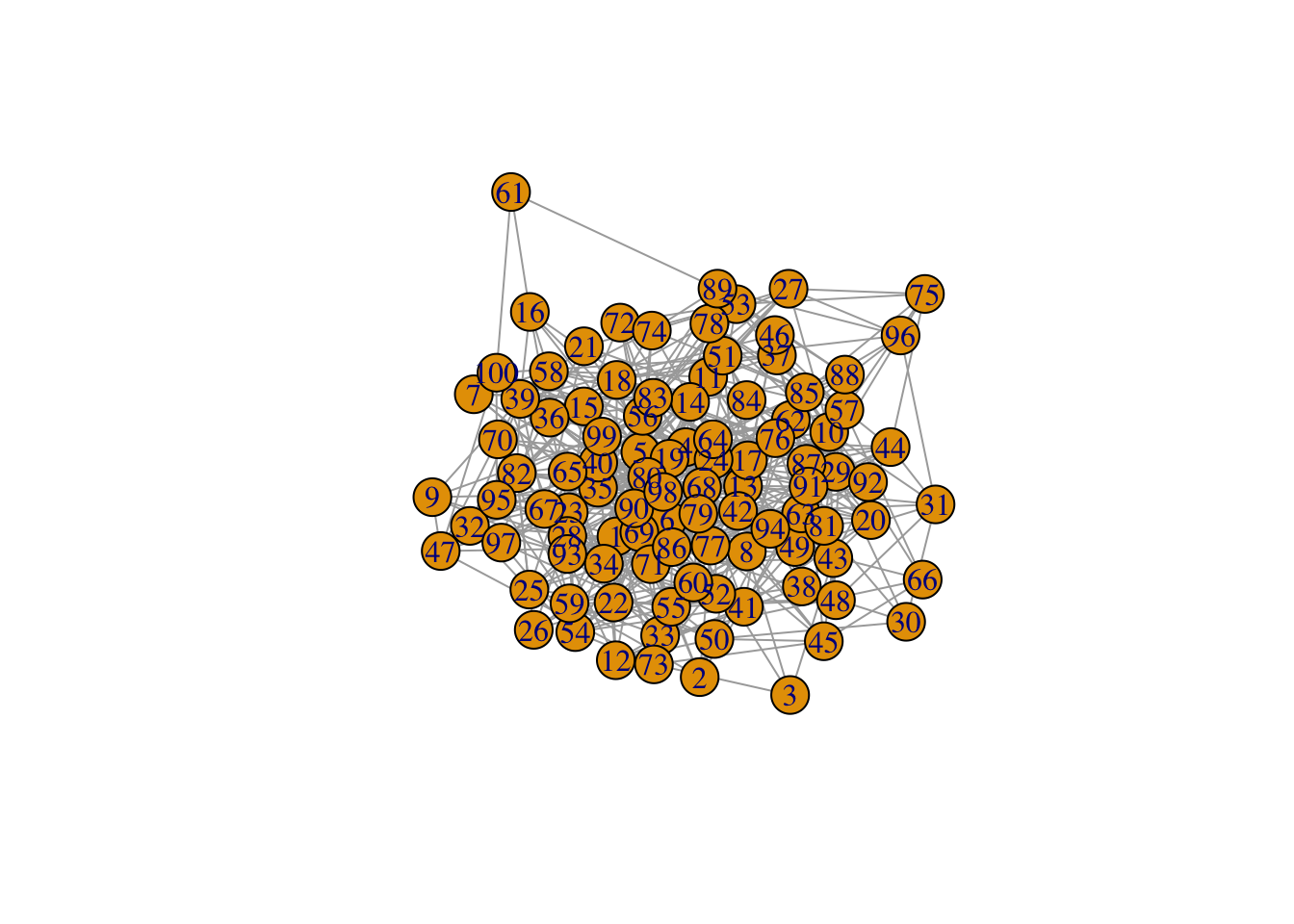

For example, in the network @ref9fig:random3-10 below, what is the shortest path from node 3 to node 90?

set.seed(42)

random3 <- erdos.renyi.game(100, 0.1, type = "gnp")

plot(random3)

FIGURE 3.2: A random network to explain Breath-first search (BFS) algorithm

We are not even sure whether the two nodes are connected or not, let alone knowing the shortest path. Of course, computers can do this very fast:

shortest_paths(random3, from = 3, to = 90)## $vpath

## $vpath[[1]]

## + 4/100 vertices, from a45c575:

## [1] 3 8 28 90

##

##

## $epath

## NULL

##

## $predecessors

## NULL

##

## $inbound_edges

## NULLFrom the result, we know that d3,90=3. The path is: 3→8→28→90. But how did the computer find this path?

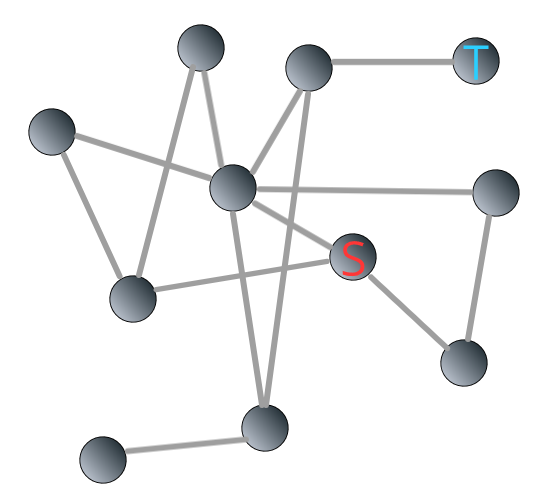

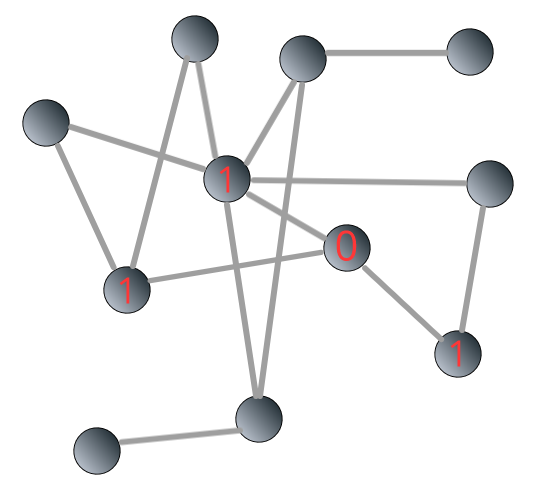

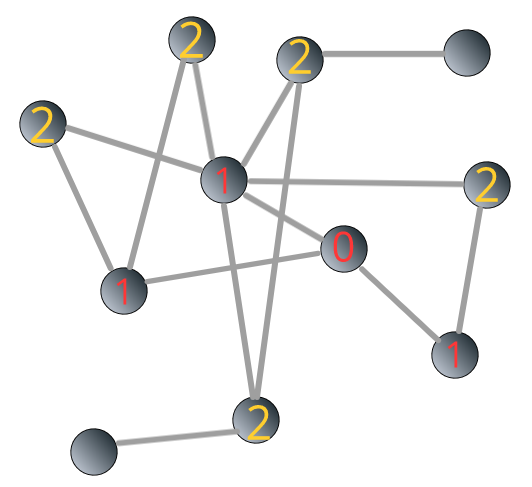

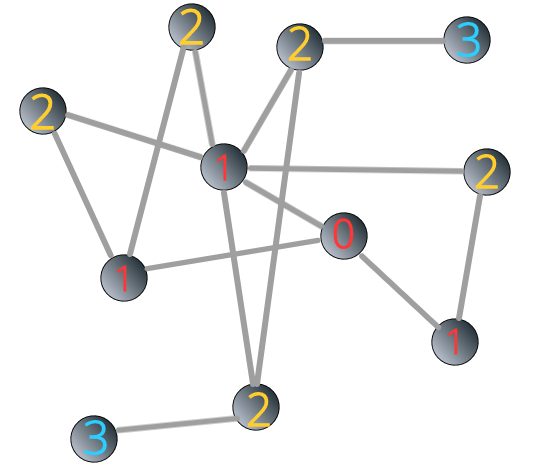

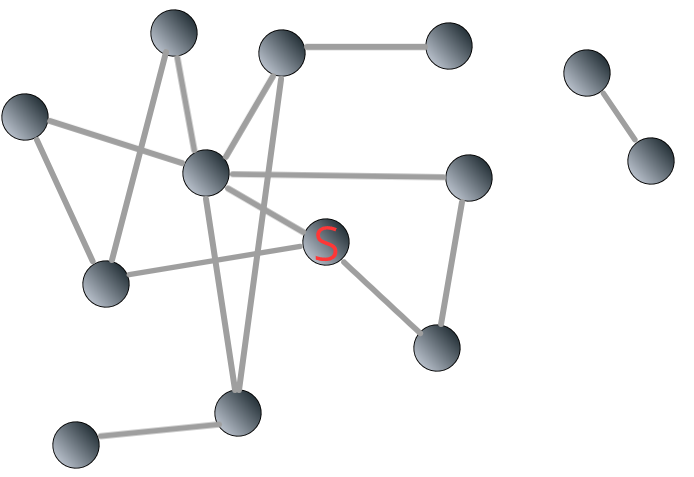

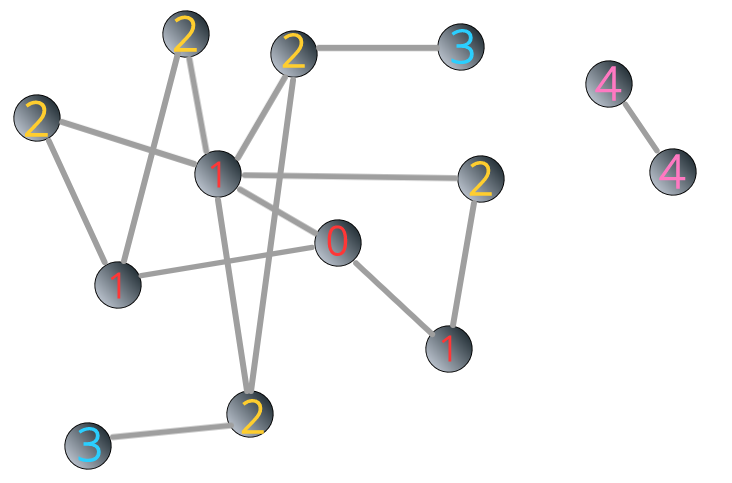

The most frequently used algorithm to find the shortest path between two nodes is called “Breadth-First Search (BFS).” It starts from the “source” node, which is labeled as 0. Neighbors of the “source” node is then labeled as 1, then the neighbors’ neighbors, until it reaches the “target” node.

For example, we are interested in knowing the shortest path from the source node (“S”) to the target (“T”) in the following Network 3.3:

FIGURE 3.3: Illustrating breadth-first search algorithm: source and target

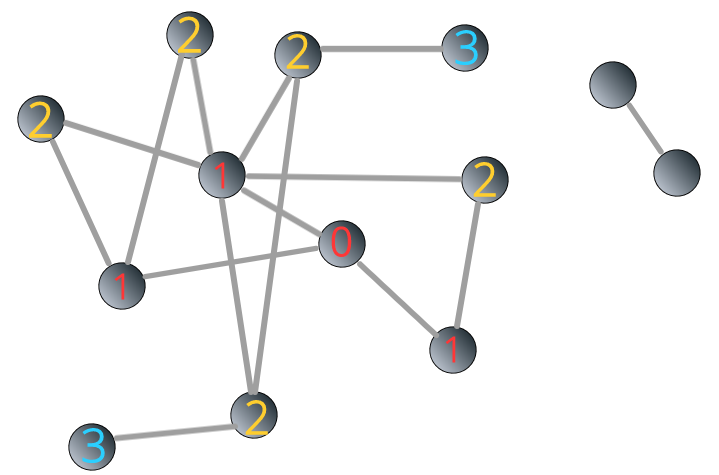

The breadth-first search algorithm is shown in Figure 3.4 below:

FIGURE 3.4: Illustrating breadth-first search algorithm: steps

We can see that the shortest path from the “source” to the “target” is 3.

3.1.3 Connectedness

In a disconnected network, subnetworks are called components or clusters. A bridge can connect two components.

For a large network, there are two ways to find out whether it consists of components:

Using linear algebra to look at the network’s adjacency matrix could be rearranged into a block diagonal;

Using BSF algorithm.

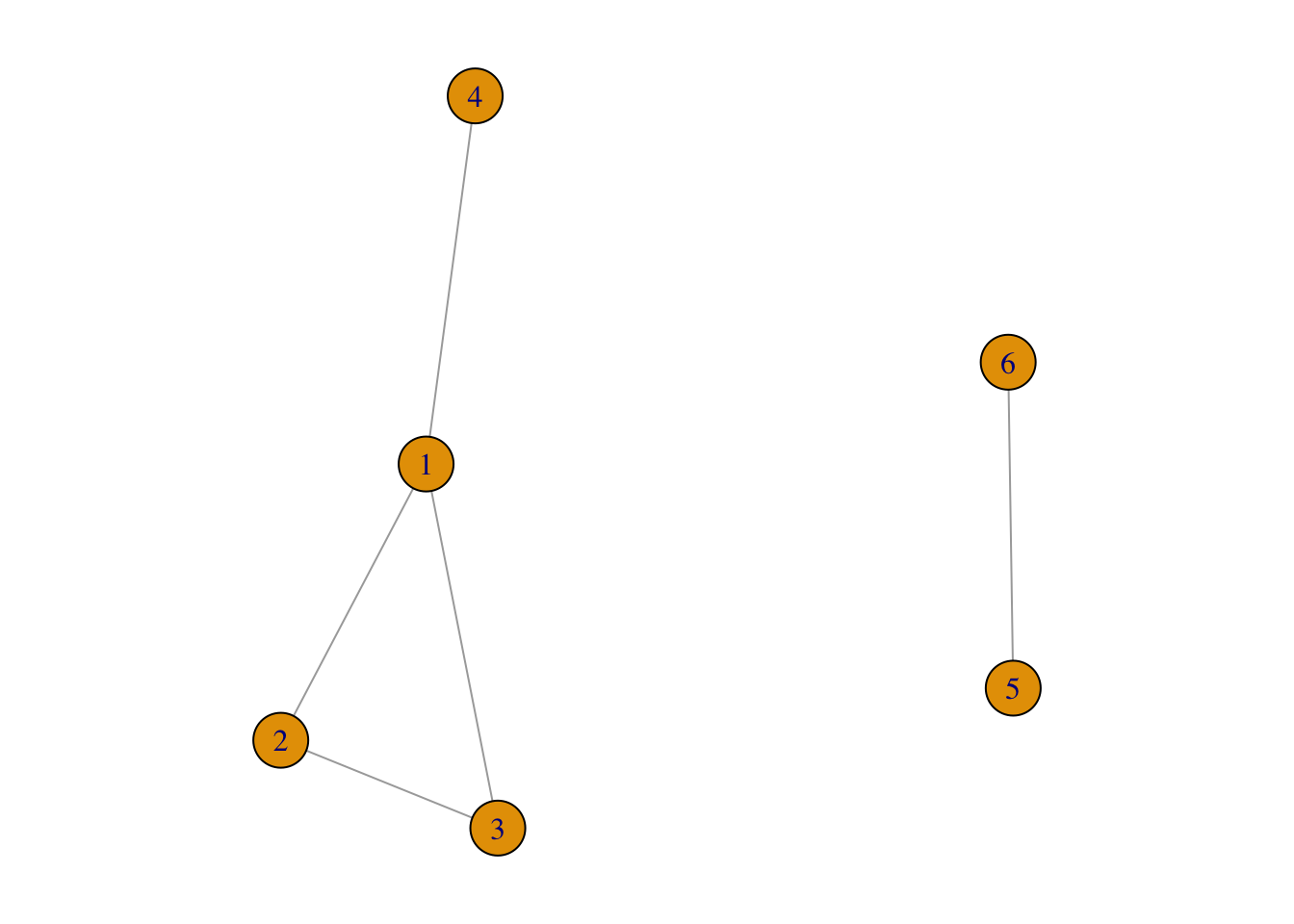

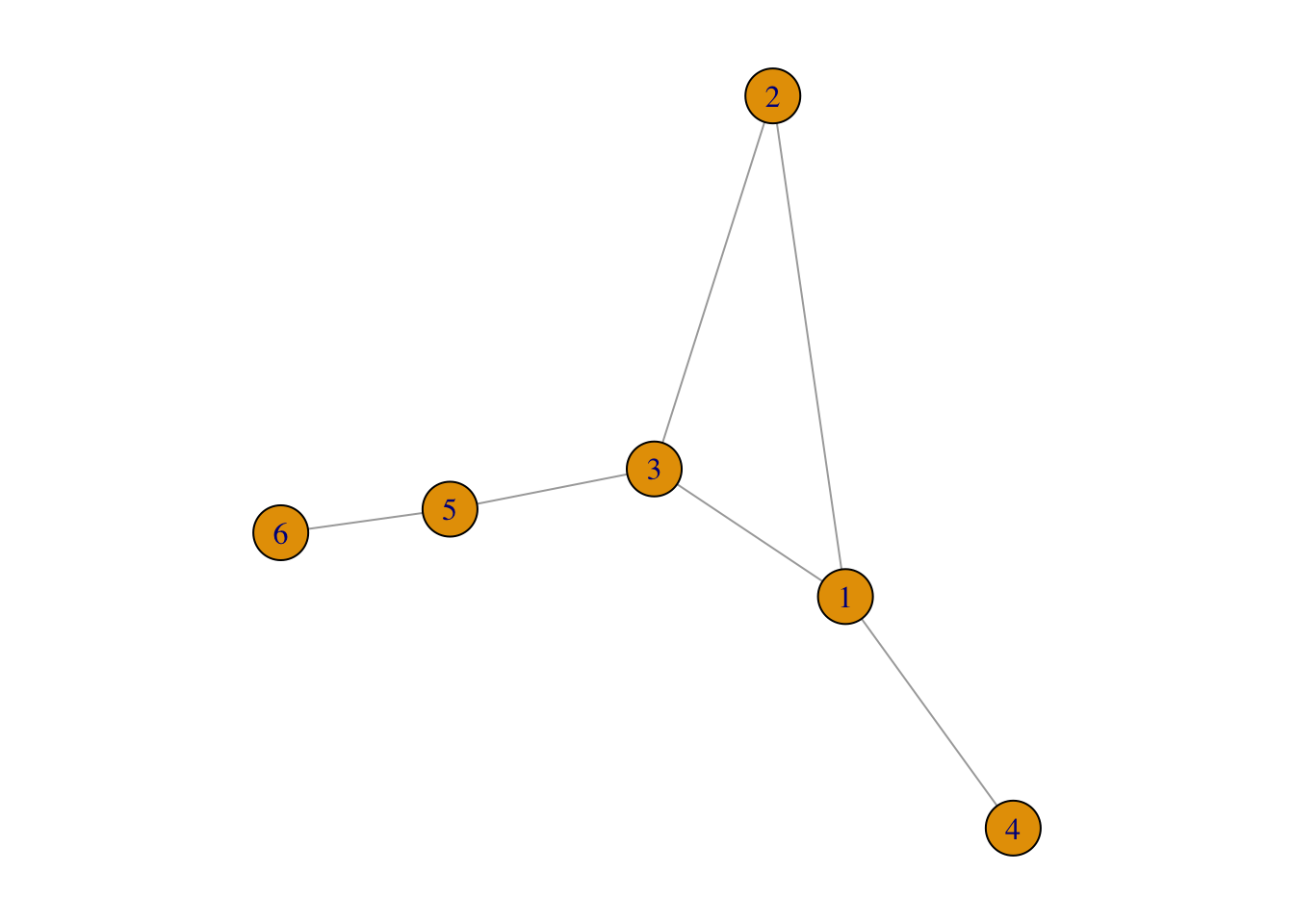

For example, in Figure 3.5, the network contains two components:

disconnectedG <- graph( edges=c(1,2, 1,3, 2,3, 1,4, 5,6),

n=6,

directed=FALSE)

par(mar = c(1, 1, 1, 1))

plot(disconnectedG)

FIGURE 3.5: An example of disconnected network

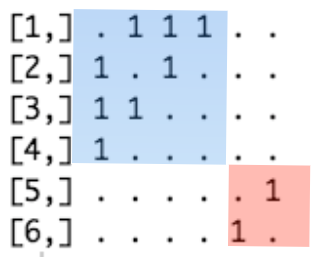

In its adjacency matrix, nonzero elements are contained in square blocks along the diagonal and all the remaining elements are zeros:

FIGURE 3.6: Adjacency matrix of the disconnected network

If the components are bridged:

connectedG <- graph( edges=c(1,2, 1,3, 2,3, 1,4, 3,5, 5,6), n=6,

directed=FALSE)

par(mar = c(1, 1, 1, 1))

plot(connectedG)

FIGURE 3.7: An example of connected network

Then its adjacency matrix cannot be rearranged in a block diagonal form:

## 6 x 6 sparse Matrix of class "dgCMatrix"

##

## [1,] . 1 1 1 . .

## [2,] 1 . 1 . . .

## [3,] 1 1 . . 1 .

## [4,] 1 . . . . .

## [5,] . . 1 . . 1

## [6,] . . . . 1 .Then how can we find whether a network is connected or disconnected using BFS algorithm?

It’s very easy. In a connected network, we can reach all the nodes from the “source node.” Therefore, the number of labeled nodes is equal to the size of the network, N. However, in a disconnected network, nodes in different components cannot be reached, so the number of labeled nodes is smaller than N.

To illustrate, let’s go back to the network 3.3. This time, we add a component consisting two nodes to it.

FIGURE 3.8: A disconnected network composed of two components

We apply BFS algorithm to the network 3.8. The starting point is the same as above. You can see that the process ceased when the label reaches 3:

FIGURE 3.9: BFS stops in a disconnected network

How can we identify the remaining component(s)? In the example above, we assign label 4 to a randomly unlabeled node j and all the other nodes reachable from node j.

FIGURE 3.10: Identifying components in a disconnected network through BFS algorithm

What if there are multiple components? It’s the same as the above step. After labeling all reachable nodes, if the number of labeled nodes is still smaller than N, then we assign label 5 to a randomly unlabeled node m, and all other nodes reachable from node m. The process goes on until the number of labeled nodes is equal to N.

3.1.4 Clustering coefficient

To what degree are a node’s neighbors connected with each other? That’s what clustering coefficient measures.

If the degree of node i is ki, then node i’s local clustering coefficient is defined as

Ci=Li÷ki⋅(ki−1)2=2⋅Liki⋅(ki−1)

Li represents the actual number of links between node i’s ki neighbors, and ki⋅(ki−1)2 means the highest number of links possible between those neighbors.

Local clustering coefficient of a node is the probability that two neighbors of a given node has a link to each other. It measures the local density of links in a network.

Local clustering coefficient is for nodes. If we are interested in the degree of clustering for a network, we need to calculate average clustering coefficient, denoted as ⟨C⟩ which is simply the average of local clustering coefficient of all the nodes in a given network:

⟨C⟩=1N⋅N∑i=1Ci